Deep Learning in MATLAB

What Is Deep Learning?

Deep learning is a branch of machine learning that teaches computers to do what comes naturally to humans: learn from experience. Deep learning uses neural networks to learn useful representations of features directly from data. Neural networks combine multiple nonlinear processing layers, using simple elements operating in parallel and inspired by biological nervous systems. Deep learning models can achieve state-of-the-art accuracy in object classification, sometimes exceeding human-level performance.

Deep Learning Toolbox™ provides simple MATLAB® commands for creating and interconnecting the layers of a deep neural network. Examples and pretrained networks make it easy to use MATLAB for deep learning, even without knowledge of advanced computer vision algorithms or neural networks.
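As a minimal sketch of what creating and interconnecting layers can look like, the following builds a small image classification network with one skip connection. The layer names, the 28-by-28 grayscale input size, and the ten output classes are hypothetical choices made for illustration, and the code assumes a release in which `dlnetwork` supports `addLayers` and `connectLayers`.

```matlab
% Sketch: build a small network and add one skip connection.
% All sizes and layer names below are illustrative assumptions.
layers = [
    imageInputLayer([28 28 1], Normalization="none", Name="in")
    convolution2dLayer(3, 16, Padding="same", Name="conv1")
    reluLayer(Name="relu1")
    convolution2dLayer(3, 16, Padding="same", Name="conv2")
    additionLayer(2, Name="add")          % second input is connected below
    fullyConnectedLayer(10, Name="fc")
    softmaxLayer(Name="softmax")];

net = dlnetwork;                           % start from an empty network
net = addLayers(net, layers);              % layers in an array are connected in sequence
net = connectLayers(net, "relu1", "add/in2");  % skip connection around conv2
net = initialize(net);                     % initialize learnable parameters

disp(net)
```

To inspect the resulting architecture interactively, you can pass `net` to `analyzeNetwork` or open it in Deep Network Designer.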

For a free hands-on introduction to practical deep learning methods, see Deep Learning Onramp. To quickly get started with deep learning, see Try Deep Learning in 10 Lines of MATLAB Code.

Start Deep Learning Faster Using Transfer Learning

Transfer learning is commonly used in deep learning applications. You can take a pretrained network and use it as a starting point to learn a new task. Fine-tuning a network with transfer learning is much faster and easier than training from scratch. You can quickly make the network learn a new task using a smaller number of training images. The advantage of transfer learning is that the pretrained network has already learned a rich set of features that can be applied to a wide range of other similar tasks. For an interactive example, see Prepare Network for Transfer Learning Using Deep Network Designer. For a programmatic example, see Retrain Neural Network to Classify New Images.
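The following sketch outlines one possible transfer learning workflow. It assumes that the `MerchData.zip` example image set is available on the MATLAB path and that the pretrained SqueezeNet network is installed. The training option values are illustrative rather than recommended settings.

```matlab
% Sketch: fine-tune a pretrained network on a small labeled image set.
% Assumption: MerchData.zip (a small example data set) is on the MATLAB path.
unzip("MerchData.zip");
imds = imageDatastore("MerchData", ...
    IncludeSubfolders=true, LabelSource="foldernames");
[imdsTrain, imdsVal] = splitEachLabel(imds, 0.7, "randomized");

classNames = categories(imds.Labels);
numClasses = numel(classNames);

% Load pretrained SqueezeNet with its final layers adapted to the new classes.
net = imagePretrainedNetwork("squeezenet", NumClasses=numClasses);
inputSize = net.Layers(1).InputSize;

% Resize images to the network input size as they are read.
augTrain = augmentedImageDatastore(inputSize(1:2), imdsTrain);
augVal   = augmentedImageDatastore(inputSize(1:2), imdsVal);

% Illustrative option values; tune them for your own data.
options = trainingOptions("adam", ...
    InitialLearnRate=1e-4, ...
    MaxEpochs=5, ...
    MiniBatchSize=10, ...
    ValidationData=augVal, ...
    Verbose=false);

net = trainnet(augTrain, net, "crossentropy", options);

% Estimate accuracy on the held-out images.
scores = minibatchpredict(net, augVal);
YVal = scores2label(scores, classNames);
accuracy = mean(YVal == imdsVal.Labels);
fprintf("Validation accuracy: %.2f\n", accuracy);
```

A low learning rate is a common choice for fine-tuning because it keeps the pretrained weights from changing too quickly.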

To choose whether to use a pretrained network or create a new deep network, consider the scenarios in this table.

| | Use a Pretrained Network for Transfer Learning | Create a New Deep Network |
|---|---|---|
| Training Data | Hundreds to thousands of labeled data (small) | Thousands to millions of labeled data |
| Computation | Moderate computation (GPU optional) | Compute intensive (requires GPU for speed) |
| Training Time | Seconds to minutes | Days to weeks for real problems |
| Model Accuracy | Good, depends on the pretrained model | High, but can overfit to small data sets |

To explore a selection of pretrained networks, use Deep Network Designer.

Deep Learning Workflows

To learn more about deep learning application areas, see Applications.

| Domain | Example Workflow | Learn More |
|---|---|---|
| Image classification, regression, and processing | Apply deep learning to image data tasks. For example, use deep learning for image classification and regression. | Get Started with Transfer Learning; Pretrained Deep Neural Networks; Create Simple Deep Learning Neural Network for Classification |
| Sequences and time series | Apply deep learning to sequence and time series tasks. For example, use deep learning for sequence classification and time series forecasting. | Sequence Classification Using Deep Learning; Time Series Forecasting Using Deep Learning; Compare Deep Learning and ARMA Models for Time Series Modeling |
| Computer vision | Apply deep learning to computer vision applications. For example, use deep learning for semantic segmentation and object detection. | Get Started with Semantic Segmentation Using Deep Learning (Computer Vision Toolbox); Detect and Segment Objects (Computer Vision Toolbox) |
| Audio processing | Apply deep learning to audio and speech processing applications. For example, use deep learning for speaker identification, speech command recognition, and acoustic scene recognition. | Deep Learning for Audio Applications (Audio Toolbox) |
| Automated driving | Apply deep learning to automated driving applications. For example, use deep learning for vehicle detection and semantic segmentation. | Train a Deep Learning Vehicle Detector (Automated Driving Toolbox) |
| Signal processing | Apply deep learning to signal processing applications. For example, use deep learning for waveform segmentation, signal classification, and denoising speech signals. | Classify Time Series Using Wavelet Analysis and Deep Learning |
| Wireless communications | Apply deep learning to wireless communications systems. For example, use deep learning for positioning, spectrum sensing, autoencoder design, and digital predistortion (DPD). | Spectrum Sensing with Deep Learning to Identify 5G, LTE, and WLAN Signals (Communications Toolbox); Three-Dimensional Indoor Positioning with 802.11az Fingerprinting and Deep Learning (WLAN Toolbox) |
| Reinforcement learning | Train deep neural network agents by interacting with an unknown dynamic environment. For example, use reinforcement learning to train policies to implement controllers and decision-making algorithms for complex applications such as resource allocation, robotics, and autonomous systems. | |
| Computational finance | Apply deep learning to financial workflows. For example, use deep learning for applications including instrument pricing, trading, and risk management. | Compare Deep Learning Networks for Credit Default Prediction |
| Lidar processing | Apply deep learning algorithms to process lidar point cloud data. For example, use deep learning for semantic segmentation and object detection on 3-D organized lidar point cloud data. | Aerial Lidar Semantic Segmentation Using PointNet++ Deep Learning |
| Text analytics | Apply deep learning algorithms to text analytics applications. For example, use deep learning for text classification, language translation, and text generation. | |
| Predictive maintenance | Apply deep learning to predictive maintenance applications. For example, use deep learning for fault detection and remaining useful life estimation. | |

Deep Learning Apps

Process data, visualize and train networks, track experiments, and quantize networks interactively using apps.

You can process your data before training using apps to label ground truth data. For more information on choosing a labeling app, see Choose an App to Label Ground Truth Data.

| Name | Description | Learn More |
|---|---|---|
| Deep Network Designer | Build, visualize, edit, and train deep learning networks. | Prepare Network for Transfer Learning Using Deep Network Designer |
| Time Series Modeler | Build, train, and compare AI models for time series prediction. | Compare Deep Learning and ARMA Models for Time Series Modeling; Build Custom Network for Time Series Modeling of a Virtual Sensor |
| Experiment Manager | Create deep learning experiments to train networks under multiple initial conditions and compare the results. | Compare Classification Network Architectures Using Experiment; Compare Dropout Probabilities and Filter Configurations for Image Regression Using Experiment |
| Deep Network Quantizer | Reduce the memory requirement of a deep neural network by quantizing weights, biases, and activations of convolution layers to 8-bit scaled integer data types. | |
| Reinforcement Learning Designer (Reinforcement Learning Toolbox) | Design, train, and simulate reinforcement learning agents. | Design and Train Agent Using Reinforcement Learning Designer (Reinforcement Learning Toolbox) |
| Image Labeler (Computer Vision Toolbox) | Label ground truth data in a collection of images. | Get Started with the Image Labeler (Computer Vision Toolbox) |
| Video Labeler (Computer Vision Toolbox) | Label ground truth data in a video, in an image sequence, or from a custom data source reader. | Get Started with the Video Labeler (Computer Vision Toolbox) |
| Ground Truth Labeler (Automated Driving Toolbox) | Label ground truth data in multiple videos, image sequences, or lidar point clouds. | Get Started with Ground Truth Labeling (Automated Driving Toolbox) |
| Lidar Labeler (Lidar Toolbox) | Label objects in a point cloud or a point cloud sequence. The app reads point cloud data from PLY, PCAP, LAS, LAZ, ROS and PCD files. | Get Started with the Lidar Labeler (Lidar Toolbox) |
| Signal Labeler (Signal Processing Toolbox) | Label signals for analysis or for use in machine learning and deep learning applications. | Use Signal Labeler App (Signal Processing Toolbox) |

Train Classifiers Using Features Extracted from Pretrained Networks

Feature extraction allows you to use the power of pretrained networks without investing time and effort into training. Feature extraction can be the fastest way to use deep learning. You extract learned features from a pretrained network and use those features to train a classifier, for example, a support vector machine (SVM), which requires Statistics and Machine Learning Toolbox™. For example, if an SVM trained on features extracted from a SqueezeNet neural network achieves over 90% accuracy on your training and validation sets, then fine-tuning with transfer learning might not be worth the effort for a small gain in accuracy. If you fine-tune on a small data set, then you also risk overfitting. If the SVM cannot achieve good enough accuracy for your application, then fine-tuning is worth the effort to seek higher accuracy.

For an example, see Extract Image Features Using Pretrained Network.
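As a rough sketch of this approach, the following code extracts activations from a late layer of a pretrained SqueezeNet network and trains a multiclass SVM on them. It assumes that the `MerchData.zip` example image set is on the MATLAB path, that Statistics and Machine Learning Toolbox is installed, and that the network contains a layer named `"pool10"` whose output has size 1-by-1-by-C-by-N. Inspect `net.Layers` to confirm the layer name in your release.

```matlab
% Sketch: use a pretrained network as a fixed feature extractor for an SVM.
% Assumptions: MerchData.zip is on the path; "pool10" is a valid layer name.
unzip("MerchData.zip");
imds = imageDatastore("MerchData", ...
    IncludeSubfolders=true, LabelSource="foldernames");
[imdsTrain, imdsTest] = splitEachLabel(imds, 0.7, "randomized");

net = imagePretrainedNetwork("squeezenet");
inputSize = net.Layers(1).InputSize;
augTrain = augmentedImageDatastore(inputSize(1:2), imdsTrain);
augTest  = augmentedImageDatastore(inputSize(1:2), imdsTest);

% Extract activations from a late pooling layer (no training involved).
layer = "pool10";
featTrain = minibatchpredict(net, augTrain, Outputs=layer);
featTest  = minibatchpredict(net, augTest,  Outputs=layer);

% Assumed output layout is 1-by-1-by-C-by-N; convert to N-by-C rows.
featTrain = double(squeeze(featTrain))';
featTest  = double(squeeze(featTest))';

% Train a multiclass SVM on the extracted features.
classifier = fitcecoc(featTrain, imdsTrain.Labels);

YPred = predict(classifier, featTest);
accuracy = mean(YPred == imdsTest.Labels);
fprintf("Test accuracy: %.2f\n", accuracy);
```

Because the network weights are never updated, this workflow typically runs quickly even without a GPU.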

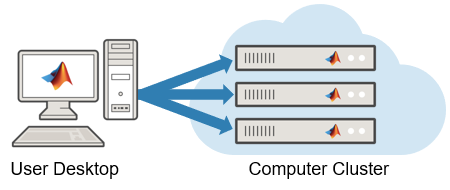

Deep Learning with Big Data on CPUs, GPUs, in Parallel, and on the Cloud

Training deep networks is computationally intensive and can take many hours of computing time; however, neural networks are inherently parallel algorithms. You can use Parallel Computing Toolbox™ to take advantage of this parallelism by running in parallel using high-performance GPUs and computer clusters. To learn more about deep learning in parallel, in the cloud, or using a GPU, see Scale Up Deep Learning in Parallel, on GPUs, and in the Cloud.
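For instance, one way to select the training hardware is through the `ExecutionEnvironment` training option. The sketch below picks a GPU when one is usable and falls back to the CPU otherwise. The solver and epoch count are placeholders.

```matlab
% Sketch: choose the execution environment based on available hardware.
if canUseGPU
    env = "gpu";   % a supported GPU and Parallel Computing Toolbox are available
else
    env = "cpu";
end

% Placeholder solver and epoch count, shown only to illustrate the option.
options = trainingOptions("adam", ...
    ExecutionEnvironment=env, ...
    MaxEpochs=1, ...
    Verbose=false);

disp(options.ExecutionEnvironment)
```

Other values of this option, such as `"multi-gpu"`, target multiple GPUs or parallel pools. See the `trainingOptions` reference page for the values supported in your release.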

Datastores in MATLAB® are a convenient way of working with and representing collections of data that are too large to fit in memory at one time. To learn more about deep learning with large data sets, see Deep Learning with Big Data.
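To illustrate how a datastore reads data incrementally rather than loading everything at once, the following sketch writes a few small random images to a temporary folder and then iterates over them with an `imageDatastore`. The folder name and class names are hypothetical and exist only to make the example self-contained.

```matlab
% Sketch: create a tiny labeled image collection and read it through a datastore.
% The folder and class names are made up for this illustration.
dataFolder = fullfile(tempdir, "datastoreDemoImages");
classes = ["classA" "classB"];
for c = classes
    classFolder = fullfile(dataFolder, c);
    if ~isfolder(classFolder)
        mkdir(classFolder);
    end
    for k = 1:4
        imwrite(rand(32, 32, 3), fullfile(classFolder, "img" + k + ".png"));
    end
end

% The datastore stores file locations and labels, not the image data itself.
imds = imageDatastore(dataFolder, ...
    IncludeSubfolders=true, LabelSource="foldernames");
disp(countEachLabel(imds))

% Read one image at a time; only the current image is held in memory.
numRead = 0;
reset(imds);
while hasdata(imds)
    img = read(imds);
    numRead = numRead + 1;
end
fprintf("Read %d images of size %s\n", numRead, mat2str(size(img)));
```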

Deep Learning Using Simulink

Implement deep learning functionality in Simulink® models by using blocks from the Deep Neural Networks and Python Neural Networks block libraries, included in the Deep Learning Toolbox™, or by using the Deep Learning Object Detector block from the Analysis & Enhancement block library included in the Computer Vision Toolbox™.

For more information, see Deep Learning with Simulink.

| Block | Description |
|---|---|
| | Classify data using a trained deep learning neural network |
| | Predict responses using a trained deep learning neural network |
| | Classify data using a trained deep learning recurrent neural network |
| | Predict responses using a trained recurrent neural network |
| Deep Learning Object Detector (Computer Vision Toolbox) | Detect objects using trained deep learning object detector |
| | Predict responses using pretrained Python® TensorFlow™ model |
| | Predict responses using pretrained Python PyTorch® model |
| | Predict responses using pretrained Python ONNX™ model |
| | Predict responses using pretrained custom Python model |

Deep Learning Interpretability

Deep learning networks are often described as "black boxes" because the reason that a network makes a certain decision is not always obvious. You can use interpretability techniques to translate network behavior into output that a person can interpret. This interpretable output can then answer questions about the predictions of a network.

Deep Learning Toolbox provides several deep learning visualization methods to help you investigate and understand network behavior, for example, gradCAM, occlusionSensitivity, and imageLIME.

For more information, see Deep Learning Visualization Methods.
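As an illustration, the following sketch computes a Grad-CAM map for the top predicted class of a pretrained SqueezeNet network and overlays it on the input image. It assumes that the pretrained network is installed, that `imresize` is available in your installation, and that `gradCAM` accepts a numeric class index for a `dlnetwork` object. The test image `peppers.png` ships with MATLAB.

```matlab
% Sketch: highlight the image regions that drive the top prediction.
[net, classNames] = imagePretrainedNetwork("squeezenet");
inputSize = net.Layers(1).InputSize(1:2);

img = imresize(imread("peppers.png"), inputSize);
X = single(img);

% Find the class with the highest score.
scores = predict(net, X);
[~, classIdx] = max(scores);
label = classNames(classIdx);

% Compute the Grad-CAM map for that class.
scoreMap = gradCAM(net, X, classIdx);

% Overlay the map on the image.
figure
imshow(img)
hold on
imagesc(scoreMap, AlphaData=0.5)
colormap jet
hold off
title(label)
```

Regions with high map values contribute most to the score for the selected class.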

Deep Learning Customization

You can train and customize a deep learning model in various ways. For example, you can build a network using built-in layers or define custom layers. You can then train your network using the built-in training function trainnet or use a custom training loop. You can also define a deep learning model as a function and use a custom training loop. For help deciding which method to use, consult the following table.

| Method | Use Case | Learn More |
|---|---|---|
| Built-in training and layers | Suitable for most deep learning tasks. | |
| Custom layers | If Deep Learning Toolbox does not provide the layer you need for your task, then you can create a custom layer. | |
| Custom training loop | If you need additional customization, you can build and train your network using a custom training loop. | |

For more information, see Train Deep Learning Model in MATLAB.
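To give a sense of what a custom training loop involves, here is a minimal sketch that fits a small regression network to synthetic data using `dlfeval`, `dlgradient`, and `adamupdate`. The data, network size, learning rate, and the helper function name `modelLoss` are all illustrative assumptions. The sketch assumes a release that allows local functions in scripts.

```matlab
% Sketch: minimal custom training loop on synthetic regression data.
rng(0);
XData = rand(2, 128, "single");        % 2 features, 128 observations
TData = sum(XData, 1);                 % target: sum of the two features
X = dlarray(XData, "CB");              % channel-by-batch format
T = dlarray(TData, "CB");

layers = [
    featureInputLayer(2)
    fullyConnectedLayer(16)
    reluLayer
    fullyConnectedLayer(1)];
net = dlnetwork(layers);

% Adam solver state and illustrative hyperparameters.
avgGrad = [];
avgSqGrad = [];
learnRate = 0.01;
numIterations = 200;
lossHistory = zeros(1, numIterations);

for iter = 1:numIterations
    % Evaluate loss and gradients with automatic differentiation.
    [loss, gradients] = dlfeval(@modelLoss, net, X, T);

    % Update the learnable parameters.
    [net, avgGrad, avgSqGrad] = adamupdate(net, gradients, ...
        avgGrad, avgSqGrad, iter, learnRate);

    lossHistory(iter) = extractdata(loss);
end

fprintf("Loss: %.4f (first) -> %.4f (last)\n", ...
    lossHistory(1), lossHistory(end));

function [loss, gradients] = modelLoss(net, X, T)
    % Forward pass, loss, and gradients with respect to the learnables.
    Y = forward(net, X);
    loss = mse(Y, T);
    gradients = dlgradient(loss, net.Learnables);
end
```

The same task could be trained with `trainnet` in a single call. The explicit loop is useful mainly when you need control over the loss, the update rule, or the batching.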

Deep Learning Import and Export

You can import neural networks from TensorFlow 2, TensorFlow-Keras, Keras 3, PyTorch, and the ONNX (Open Neural Network Exchange) model format. You can also export Deep Learning Toolbox neural networks to TensorFlow 2 and the ONNX model format.

Tip

You can import models from external platforms using the Deep Network Designer app. On import, the app shows an import report with details about any issues that require attention.

Import Functions

| External Deep Learning Platform and Model Format | Import Model as dlnetwork |
|---|---|
| TensorFlow neural network, TensorFlow-Keras neural network in SavedModel format, or Keras 3 neural network | Deep Network Designer or importNetworkFromTensorFlow or importNetworkFromKeras |
| Traced PyTorch model in a .pt file | Deep Network Designer or importNetworkFromPyTorch |
| Neural network in ONNX model format | importNetworkFromONNX |

When you import a model with TensorFlow layers, PyTorch layers, or ONNX operators that the software cannot convert to built-in MATLAB layers, the software automatically generates custom layers. The software saves the automatically generated custom layers to a package in the current folder. For more information, see Autogenerated Custom Layers.

Export Functions

| External Deep Learning Platform and Model Format | Export Neural Network or Layer Graph |
|---|---|
| TensorFlow 2 model in Python package | exportNetworkToTensorFlow |
| ONNX model format | exportONNXNetwork |

The exportNetworkToTensorFlow function saves a Deep Learning Toolbox neural network as a TensorFlow model in a Python package. For more information on how to load the exported model and save it in SavedModel format, see Load Exported TensorFlow Model and Save TensorFlow Model.

By using ONNX as an intermediate format, you can interoperate with other deep learning frameworks that support ONNX model export or import.
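As a sketch of such a round trip, the following code exports a small untrained network to an ONNX file and imports it back. It assumes that the Deep Learning Toolbox Converter for ONNX Model Format support package is installed. The file name `tinyNet.onnx` and the network architecture are hypothetical, and the imported network might contain autogenerated custom layers.

```matlab
% Sketch: export a small network to ONNX and import it again.
% Assumption: the ONNX converter support package is installed.
layers = [
    imageInputLayer([28 28 1], Normalization="none")
    convolution2dLayer(3, 8, Padding="same")
    reluLayer
    fullyConnectedLayer(10)
    softmaxLayer];
net = dlnetwork(layers);

onnxFile = "tinyNet.onnx";             % hypothetical file name
exportONNXNetwork(net, onnxFile);

% Import the ONNX model back as a dlnetwork object.
netImported = importNetworkFromONNX(onnxFile);
disp(netImported)
```

Any framework that reads ONNX files can load the exported file in the same way.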