Modeling and Prediction

Develop predictive models using topic models and word embeddings

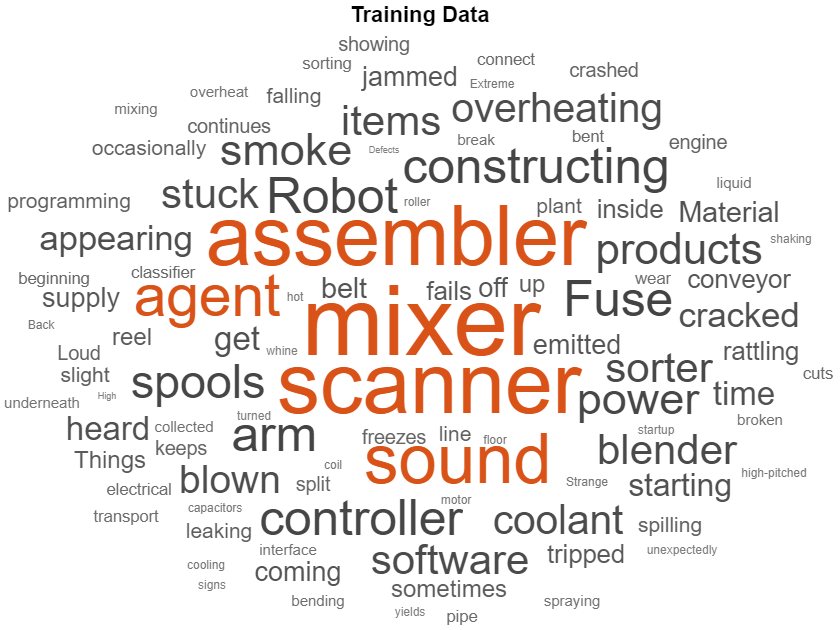

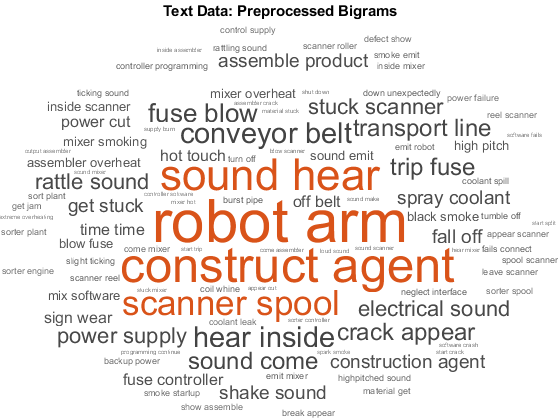

To find clusters and extract features from high-dimensional text datasets, you can use machine learning techniques and models such as latent semantic analysis (LSA), latent Dirichlet allocation (LDA), and word embeddings. You can combine features created with Text Analytics Toolbox™ with features from other data sources. With these features, you can build machine learning models that take advantage of textual, numeric, and other types of data.
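As a rough illustration of combining text and non-text features, the following plain-Python sketch builds bag-of-words counts for a few short documents and appends numeric columns from another data source to each row. It is not the toolbox API (in MATLAB you would typically use functions such as tokenizedDocument and bagOfWords). The tokenizer, the example reports, and the numeric columns (machine age and downtime) are hypothetical.

```python
import re
from collections import Counter


def tokenize(text):
    # Lowercase and keep runs of letters, digits, and apostrophes (a deliberately simple tokenizer).
    return re.findall(r"[a-z0-9']+", text.lower())


def bag_of_words(docs):
    """Return (vocabulary, count matrix) for a list of strings."""
    tokenized = [tokenize(d) for d in docs]
    vocab = sorted({w for toks in tokenized for w in toks})
    index = {w: i for i, w in enumerate(vocab)}
    counts = []
    for toks in tokenized:
        row = [0] * len(vocab)
        for w, c in Counter(toks).items():
            row[index[w]] = c
        counts.append(row)
    return vocab, counts


def combine_features(text_counts, numeric):
    # Append each document's numeric features to its word-count row.
    return [row + list(extra) for row, extra in zip(text_counts, numeric)]


reports = ["Pump leaking oil", "Oil pressure low", "Pump noisy"]
# Hypothetical numeric data from another source: (machine age in years, downtime in hours).
numeric = [(5, 2.0), (3, 0.5), (5, 1.0)]

vocab, counts = bag_of_words(reports)
X = combine_features(counts, numeric)
print(vocab)  # ['leaking', 'low', 'noisy', 'oil', 'pressure', 'pump']
print(X[0])   # [1, 0, 0, 1, 0, 1, 5, 2.0]
```

Each row of X now mixes word counts and numeric columns, so it can be passed to any classifier or regression model that accepts numeric feature vectors. In practice you would usually scale the numeric columns so they do not dominate the counts.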

Topics

Classification and Modeling

- Create Simple Preprocessing Function

This example shows how to create a function which cleans and preprocesses text data for analysis using the Preprocess Text Data Live Editor task. - Create Simple Text Model for Classification

This example shows how to train a simple text classifier on word frequency counts using a bag-of-words model. - Classify Documents Using Document Embeddings

This example shows how to train a document classifier by converting documents to feature vectors using a document embedding. - Analyze Text Data Using Multiword Phrases

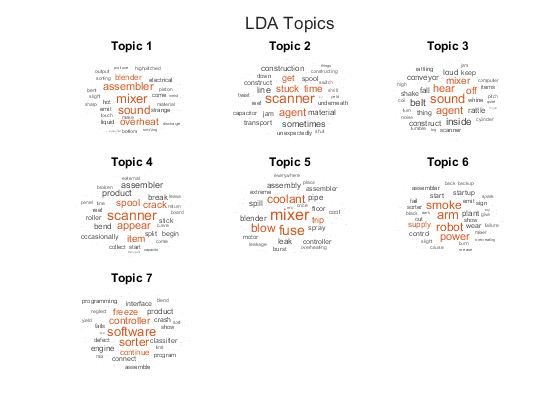

This example shows how to analyze text using n-gram frequency counts. - Analyze Text Data Using Topic Models

This example shows how to use the Latent Dirichlet Allocation (LDA) topic model to analyze text data. - Choose Number of Topics for LDA Model

This example shows how to decide on a suitable number of topics for a latent Dirichlet allocation (LDA) model. - Compare LDA Solvers

This example shows how to compare latent Dirichlet allocation (LDA) solvers by comparing the goodness of fit and the time taken to fit the model. - Visualize Document Clusters Using LDA Model

This example shows how to visualize the clustering of documents using a Latent Dirichlet Allocation (LDA) topic model and a t-SNE plot. - Visualize LDA Topic Correlations

This example shows how to analyze correlations between topics in a Latent Dirichlet Allocation (LDA) topic model. - Visualize Correlations Between LDA Topics and Document Labels

This example shows how to fit a Latent Dirichlet Allocation (LDA) topic model and visualize correlations between the LDA topics and document labels. - Train Custom Named Entity Recognition Model

This example shows how to train a custom named entity recognition (NER) model. - Create Co-occurrence Network

This example shows how to create a co-occurrence network using a bag-of-words model. - Information Retrieval with Document Embeddings

Learn about different types of document embeddings and how to use them for information retrieval. (Since R2024b) - Information Retrieval with Work Orders Data

This example shows how to use information retrieval techniques to find solutions for new work orders based on past actions taken and descriptions from work orders. (Since R2023b) - Train BERT Document Classifier

This example shows how to train a BERT neural network for document classification. (Since R2023b)
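Several of the examples above train a classifier on bag-of-words counts. As a hedged, plain-Python sketch of that idea, the code below uses multinomial naive Bayes with add-one (Laplace) smoothing as the classifier. This is a swapped-in technique chosen for brevity, not necessarily the model the toolbox examples use, and the training sentences and labels are made up for illustration.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    return re.findall(r"[a-z0-9']+", text.lower())


class NaiveBayesText:
    """Multinomial naive Bayes over bag-of-words counts with add-one smoothing."""

    def fit(self, docs, labels):
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)  # label -> word frequency counts
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(tokenize(doc))
        self.vocab = {w for c in self.word_counts.values() for w in c}
        self.total_docs = len(labels)
        return self

    def log_posterior(self, doc, label):
        # Unnormalized log P(label | doc) = log P(label) + sum of log P(word | label).
        counts = self.word_counts[label]
        total = sum(counts.values())
        v = len(self.vocab)
        score = math.log(self.class_counts[label] / self.total_docs)
        for w in tokenize(doc):
            if w in self.vocab:  # ignore words never seen in training
                score += math.log((counts[w] + 1) / (total + v))
        return score

    def predict(self, doc):
        return max(self.class_counts, key=lambda lab: self.log_posterior(doc, lab))


train_docs = [
    "engine overheating and smoke",
    "smoke from the engine bay",
    "screen flickers at startup",
    "display screen is blank",
]
train_labels = ["mechanical", "mechanical", "electrical", "electrical"]

model = NaiveBayesText().fit(train_docs, train_labels)
print(model.predict("engine smoke"))  # mechanical
print(model.predict("blank screen"))  # electrical
```

Working in log space avoids numerical underflow when documents are long, and smoothing keeps a single unseen word in one class from forcing that class's probability to zero.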

Sentiment Analysis and Keyword Extraction

- Sentiment Analysis in MATLAB

Learn about sentiment analysis techniques. (Since R2023b)

- Analyze Sentiment in Text

This example shows how to use the Valence Aware Dictionary and sEntiment Reasoner (VADER) algorithm for sentiment analysis.

- Generate Domain Specific Sentiment Lexicon

This example shows how to generate a lexicon for sentiment analysis using 10-K and 10-Q financial reports.

- Train a Sentiment Classifier

This example shows how to train a classifier for sentiment analysis using an annotated list of positive and negative sentiment words and a pretrained word embedding.

- Extract Keywords from Text Data Using RAKE

This example shows how to extract keywords from text data using Rapid Automatic Keyword Extraction (RAKE).

- Extract Keywords from Text Data Using TextRank

This example shows how to extract keywords from text data using TextRank.
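The RAKE example relies on the toolbox function rakeKeywords. To make the scoring idea concrete, here is a minimal plain-Python sketch of RAKE as it is commonly described: candidate phrases are the runs of words between stop words and punctuation, each word is scored as degree divided by frequency, and each phrase is scored as the sum of its word scores. The short stop list is an assumption; real implementations use a much larger list, so results will differ from rakeKeywords.

```python
import re
from collections import defaultdict

# A tiny illustrative stop list (assumption); real RAKE implementations use a much larger one.
STOP_WORDS = {"a", "an", "and", "are", "by", "for", "from", "in", "is",
              "of", "on", "over", "the", "to", "with"}


def candidate_phrases(text):
    """Split text at punctuation and stop words into candidate keyword phrases."""
    phrases = []
    for fragment in re.split(r"[.,;:!?()\n]", text.lower()):
        current = []
        for word in re.findall(r"[a-z0-9']+", fragment):
            if word in STOP_WORDS:
                if current:
                    phrases.append(tuple(current))
                current = []
            else:
                current.append(word)
        if current:
            phrases.append(tuple(current))
    return phrases


def rake(text, top_n=3):
    phrases = candidate_phrases(text)
    freq = defaultdict(int)
    degree = defaultdict(int)
    for phrase in phrases:
        for word in phrase:
            freq[word] += 1
            degree[word] += len(phrase)  # co-occurrences within the phrase, including the word itself
    word_score = {w: degree[w] / freq[w] for w in freq}
    phrase_score = {p: sum(word_score[w] for w in p) for p in set(phrases)}
    # Sort by descending score, breaking ties alphabetically for a stable result.
    ranked = sorted(phrase_score.items(), key=lambda item: (-item[1], item[0]))
    return [(" ".join(p), s) for p, s in ranked[:top_n]]


text = ("Compatibility of systems of linear constraints over the set of natural numbers. "
        "Criteria of compatibility of a system of linear Diophantine equations are considered.")
for phrase, score in rake(text):
    print(f"{score:.2f}  {phrase}")
```

With this stop list, the sketch prints "linear diophantine equations" (8.50), "linear constraints" (4.50), and "natural numbers" (4.00). Because degree grows with phrase length, RAKE tends to favor longer multiword phrases over single words.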

Deep Learning

- Classify Text Data Using Deep Learning

This example shows how to classify text data using a deep learning long short-term memory (LSTM) network.

- Classify Text Data Using Convolutional Neural Network

This example shows how to classify text data using a convolutional neural network.

- Classify Out-of-Memory Text Data Using Deep Learning

This example shows how to classify out-of-memory text data with a deep learning network using a transformed datastore.

- Sequence-to-Sequence Translation Using Attention

This example shows how to convert decimal strings to Roman numerals using a recurrent sequence-to-sequence encoder-decoder model with attention.

- Multilabel Text Classification Using Deep Learning

This example shows how to classify text data that has multiple independent labels.

- Generate Text Using Deep Learning (Deep Learning Toolbox)

This example shows how to train a deep learning long short-term memory (LSTM) network to generate text.

- Pride and Prejudice and MATLAB

This example shows how to train a deep learning LSTM network to generate text using character embeddings.

- Word-by-Word Text Generation Using Deep Learning

This example shows how to train a deep learning LSTM network to generate text word-by-word.

- Classify Text Data Using Custom Training Loop

This example shows how to classify text data using a deep learning bidirectional long short-term memory (BiLSTM) network with a custom training loop.

- Generate Text Using Autoencoders

This example shows how to generate text data using autoencoders.

- Define Text Encoder Model Function

This example shows how to define a text encoder model function.

- Define Text Decoder Model Function

This example shows how to define a text decoder model function.

- Language Translation Using Deep Learning

This example shows how to train a German-to-English language translator using a recurrent sequence-to-sequence encoder-decoder model with attention.

- Extract Answers from Documents Using BERT

This example shows how to modify and fine-tune a pretrained BERT model for extractive question answering. (Since R2024b)

- Out-of-Distribution Detection for BERT Document Classifier

Detect out-of-distribution (OOD) data in a BERT document classifier. (Since R2024b)

- Out-of-Distribution Detection for LSTM Document Classifier

Detect out-of-distribution (OOD) data in an LSTM document classifier. (Since R2024a)
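The LSTM and convolutional network examples feed documents to a network as sequences of word indices. The toolbox provides functions such as wordEncoding and doc2sequence for this step; the plain-Python sketch below only illustrates the general idea. The index conventions (0 for padding, 1 for unknown words), left padding, and truncation to the first tokens are assumptions made for this sketch, not the toolbox defaults.

```python
import re


def tokenize(text):
    return re.findall(r"[a-z0-9']+", text.lower())


def build_encoding(docs, min_count=1):
    """Map each vocabulary word to an integer index; 0 is reserved for padding, 1 for unknown words."""
    counts = {}
    for doc in docs:
        for w in tokenize(doc):
            counts[w] = counts.get(w, 0) + 1
    words = sorted(w for w, c in counts.items() if c >= min_count)
    return {w: i + 2 for i, w in enumerate(words)}


def to_sequences(docs, encoding, length):
    """Convert documents to fixed-length index sequences: keep the first `length` tokens, left-pad with 0."""
    seqs = []
    for doc in docs:
        idx = [encoding.get(w, 1) for w in tokenize(doc)][:length]
        seqs.append([0] * (length - len(idx)) + idx)
    return seqs


train = ["the pump is leaking", "the screen is blank"]
enc = build_encoding(train)
print(enc)  # {'blank': 2, 'is': 3, 'leaking': 4, 'pump': 5, 'screen': 6, 'the': 7}
print(to_sequences(["pump is noisy", "the screen is blank again today"], enc, length=4))
# [[0, 5, 3, 1], [7, 6, 3, 2]]
```

Words that do not appear in the training vocabulary ("noisy", "again", "today") map to the unknown index, which is why the encoding should be built from training data only. A fixed sequence length lets documents be batched together.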

Language Support

- Language Considerations

Information on using Text Analytics Toolbox features for other languages.

- Japanese Language Support

Information on Japanese support in Text Analytics Toolbox.

- Analyze Japanese Text Data

This example shows how to import, prepare, and analyze Japanese text data using a topic model.

- German Language Support

Information on German support in Text Analytics Toolbox.

- Analyze German Text Data

This example shows how to import, prepare, and analyze German text data using a topic model.