ClassificationSVM

Support vector machine (SVM) for one-class and binary classification

Description

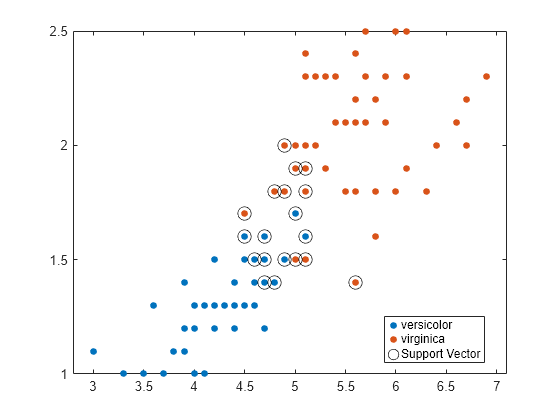

ClassificationSVM is a support vector machine (SVM) classifier for one-class and two-class learning. Trained ClassificationSVM classifiers store training data, parameter values, prior probabilities, support vectors, and algorithmic implementation information. Use these classifiers to perform tasks such as fitting a score-to-posterior-probability transformation function (see fitPosterior) and predicting labels for new data (see predict).

Creation

Create a ClassificationSVM object by using fitcsvm.

Properties

Object Functions

compact | Reduce size of machine learning model
compareHoldout | Compare accuracies of two classification models using new data
crossval | Cross-validate machine learning model
discardSupportVectors | Discard support vectors for linear support vector machine (SVM) classifier
edge | Find classification edge for support vector machine (SVM) classifier
fitPosterior | Fit posterior probabilities for support vector machine (SVM) classifier
gather | Gather properties of Statistics and Machine Learning Toolbox object from GPU
incrementalLearner | Convert binary classification support vector machine (SVM) model to incremental learner
lime | Local interpretable model-agnostic explanations (LIME)
loss | Find classification error for support vector machine (SVM) classifier
margin | Find classification margins for support vector machine (SVM) classifier
partialDependence | Compute partial dependence
plotPartialDependence | Create partial dependence plot (PDP) and individual conditional expectation (ICE) plots
predict | Classify observations using support vector machine (SVM) classifier
resubEdge | Resubstitution classification edge
resubLoss | Resubstitution classification loss
resubMargin | Resubstitution classification margin
resubPredict | Classify training data using trained classifier
resume | Resume training support vector machine (SVM) classifier
shapley | Shapley values
testckfold | Compare accuracies of two classification models by repeated cross-validation

Examples

More About

Algorithms

For the mathematical formulation of the SVM binary classification algorithm, see Support Vector Machines for Binary Classification and Understanding Support Vector Machines.

NaN, <undefined>, empty character vector (''), empty string (""), and <missing> values indicate missing values. fitcsvm removes entire rows of data corresponding to a missing response. When computing total weights (see the following paragraphs), fitcsvm ignores any weight corresponding to an observation with at least one missing predictor. This action can lead to unbalanced prior probabilities in balanced-class problems. Consequently, observation box constraints might not equal BoxConstraint.

If you specify the Cost, Prior, and Weights name-value arguments, the output model object stores the specified values in the Cost, Prior, and W properties, respectively. The Cost property stores the user-specified cost matrix (C) without modification. The Prior and W properties store the prior probabilities and observation weights, respectively, after normalization. For model training, the software updates the prior probabilities and observation weights to incorporate the penalties described in the cost matrix. For details, see Misclassification Cost Matrix, Prior Probabilities, and Observation Weights.

Note that the Cost and Prior name-value arguments are used for two-class learning. For one-class learning, the Cost and Prior properties store 0 and 1, respectively.

For two-class learning, fitcsvm assigns a box constraint to each observation in the training data. The formula for the box constraint of observation j is

$$C_j = n C_0 w_j^*,$$

where n is the number of observations in the training data, C0 is the initial box constraint (see the BoxConstraint name-value argument), and wj* is the observation weight adjusted by Cost and Prior for observation j. For details about the observation weights, see Adjust Prior Probabilities and Observation Weights for Misclassification Cost Matrix.

If you specify Standardize as true and set the Cost, Prior, or Weights name-value argument, then fitcsvm standardizes the predictors using their corresponding weighted means and weighted standard deviations. That is, fitcsvm standardizes predictor j (xj) using

$$x_j^* = \frac{x_j - \mu_j^*}{\sigma_j^*},$$

where xjk is observation k (row) of predictor j (column), and

$$\mu_j^* = \frac{1}{\sum_k w_k^*} \sum_k w_k^* x_{jk},$$

$$\left(\sigma_j^*\right)^2 = \frac{v_1}{v_1^2 - v_2} \sum_k w_k^* \left(x_{jk} - \mu_j^*\right)^2,$$

$$v_1 = \sum_k w_k^*, \qquad v_2 = \sum_k \left(w_k^*\right)^2.$$
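The per-observation box constraint C_j = n C0 w_j* and the weighted standardization (weighted mean, and weighted variance scaled by v1/(v1^2 - v2), where v1 and v2 are the sum and the sum of squares of the weights) can be sketched in plain Python rather than MATLAB. This is an illustration under stated assumptions, not the fitcsvm implementation: the helper names are hypothetical, and the sketch assumes the supplied weights have already been adjusted for Cost and Prior, so it only normalizes them to sum to 1.

```python
import math


def normalize(weights):
    """Scale weights to sum to 1 (assumed to stand in for the adjusted w_j*)."""
    total = sum(weights)
    return [w / total for w in weights]


def box_constraints(weights, c0):
    """Per-observation box constraints C_j = n * C0 * w_j*."""
    w_star = normalize(weights)
    n = len(w_star)
    return [n * c0 * w for w in w_star]


def weighted_standardize(column, weights):
    """Standardize one predictor column with the weighted mean and the
    bias-corrected weighted standard deviation (factor v1 / (v1^2 - v2))."""
    w_star = normalize(weights)
    v1 = sum(w_star)
    v2 = sum(w * w for w in w_star)
    mu = sum(w * x for w, x in zip(w_star, column)) / v1
    var = v1 / (v1 ** 2 - v2) * sum(w * (x - mu) ** 2 for w, x in zip(w_star, column))
    sigma = math.sqrt(var)
    return [(x - mu) / sigma for x in column]


# Equal weights: every observation receives the initial box constraint C0.
print(box_constraints([1, 1, 1, 1], c0=2.0))  # -> [2.0, 2.0, 2.0, 2.0]
# A larger weight gives that observation a proportionally larger box constraint.
print(box_constraints([1, 1, 2], c0=1.0))  # -> [0.75, 0.75, 1.5]
# With equal weights the correction reduces to the usual n - 1 sample variance.
print(weighted_standardize([1.0, 2.0, 3.0], [1, 1, 1]))  # approximately [-1.0, 0.0, 1.0]
```

With uniform weights, n w_j* equals 1 for every observation, which is why each C_j collapses to C0 in that case.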

Assume that p is the proportion of outliers that you expect in the training data, and that you set 'OutlierFraction',p.

For one-class learning, the software trains the bias term such that 100p% of the observations in the training data have negative scores.

For two-class learning, the software implements robust learning. In other words, the software attempts to remove 100p% of the observations when the optimization algorithm converges. The removed observations correspond to gradients that are large in magnitude.
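The one-class condition, that a fraction p of the training observations receives a negative score after the bias is added, can be illustrated with a short Python sketch. It is a simplified post hoc illustration, not how fitcsvm trains the bias during optimization: it assumes the raw scores without the bias term are already available and distinct, and the helper name is hypothetical. It places the threshold midway between the two raw scores that straddle the 100p% cut.

```python
def bias_for_outlier_fraction(raw_scores, p):
    """Hypothetical helper: return a bias b such that round(p * n) of the
    values raw_scores[i] + b are negative (assumes distinct raw scores)."""
    s = sorted(raw_scores)
    n = len(s)
    k = round(p * n)  # number of observations that should score below zero
    if k <= 0:
        threshold = s[0] - 1.0  # every score stays positive
    elif k >= n:
        threshold = s[-1] + 1.0  # every score becomes negative
    else:
        # Midpoint between the k-th and (k+1)-th smallest raw scores.
        threshold = (s[k - 1] + s[k]) / 2.0
    return -threshold


raw = [5.0, 1.0, 9.0, 3.0, 7.0, 2.0, 8.0, 4.0, 6.0, 10.0]
b = bias_for_outlier_fraction(raw, p=0.2)
scores = [r + b for r in raw]
print(b, sum(score < 0 for score in scores))  # -> -2.5 2
```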

If your predictor data contains categorical variables, then the software generally uses full dummy encoding for these variables. The software creates one dummy variable for each level of each categorical variable.

The PredictorNames property stores one element for each of the original predictor variable names. For example, assume that there are three predictors, one of which is a categorical variable with three levels. Then PredictorNames is a 1-by-3 cell array of character vectors containing the original names of the predictor variables.

The ExpandedPredictorNames property stores one element for each of the predictor variables, including the dummy variables. In the same example, the categorical variable expands into three dummy variables, so ExpandedPredictorNames is a 1-by-5 cell array of character vectors containing the names of the two remaining predictor variables and the three new dummy variables.

Similarly, the Beta property stores one beta coefficient for each predictor, including the dummy variables.

The SupportVectors property stores the predictor values for the support vectors, including the dummy variables. For example, assume that there are m support vectors and three predictors, one of which is a categorical variable with three levels. Then SupportVectors is an m-by-5 matrix.

The X property stores the training data as originally input and does not include the dummy variables. When the input is a table, X contains only the columns used as predictors.
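The following Python sketch mirrors the three-predictor example. Full dummy encoding turns 3 original predictors (the analogue of PredictorNames) into 5 expanded columns (the analogue of ExpandedPredictorNames and of the column count of SupportVectors). The helper and its name_level naming scheme are hypothetical, the sorted level order is an assumption, and the names that fitcsvm actually generates may differ.

```python
def expand_full_dummy(names, rows, categorical):
    """Hypothetical helper: replace each categorical predictor with one
    0/1 dummy column per level (full dummy encoding)."""
    # Levels of each categorical predictor, in sorted order (an assumption).
    levels = {
        name: sorted({row[names.index(name)] for row in rows})
        for name in categorical
    }
    expanded_names = []
    for name in names:
        if name in levels:
            expanded_names.extend(f"{name}_{lvl}" for lvl in levels[name])
        else:
            expanded_names.append(name)
    expanded_rows = []
    for row in rows:
        new_row = []
        for name, value in zip(names, row):
            if name in levels:
                # One indicator per level: 1.0 for the observed level, else 0.0.
                new_row.extend(1.0 if value == lvl else 0.0 for lvl in levels[name])
            else:
                new_row.append(float(value))
        expanded_rows.append(new_row)
    return expanded_names, expanded_rows


names = ["Age", "Color", "Weight"]
rows = [[30, "red", 70], [40, "green", 80], [50, "blue", 60]]
expanded_names, expanded_rows = expand_full_dummy(names, rows, {"Color"})
print(len(names), len(expanded_names))  # -> 3 5
print(expanded_names)  # -> ['Age', 'Color_blue', 'Color_green', 'Color_red', 'Weight']
print(expanded_rows[0])  # -> [30.0, 0.0, 0.0, 1.0, 70.0]
```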

For predictors specified in a table, if any of the variables contain ordered (ordinal) categories, the software uses ordinal encoding for these variables.

For a variable with k ordered levels, the software creates k – 1 dummy variables. The jth dummy variable is –1 for levels up to j, and +1 for levels j + 1 through k.

The names of the dummy variables stored in the ExpandedPredictorNames property indicate the first level with the value +1. The software stores k – 1 additional predictor names for the dummy variables, including the names of levels 2, 3, ..., k.
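The ordinal encoding rule (k – 1 dummy variables, where dummy j is –1 for levels 1 through j and +1 for levels j + 1 through k) can be sketched in Python. The helper names and the variable_level naming format are hypothetical; only the –1/+1 pattern and the labeling of dummy j by level j + 1 follow the description.

```python
def ordinal_encode(level, k):
    """Encode a 1-based level of a k-level ordered variable as k - 1 values:
    dummy j is -1 when level <= j and +1 when level >= j + 1."""
    if not 1 <= level <= k:
        raise ValueError("level must be between 1 and k")
    return [-1 if level <= j else 1 for j in range(1, k)]


def ordinal_dummy_names(variable, level_names):
    """Hypothetical naming: dummy j is labeled by level j + 1, the first
    level at which it equals +1 (levels 2, 3, ..., k)."""
    return [f"{variable}_{name}" for name in level_names[1:]]


sizes = ["small", "medium", "large"]  # k = 3 ordered levels
print(ordinal_dummy_names("Size", sizes))  # -> ['Size_medium', 'Size_large']
for level, name in enumerate(sizes, start=1):
    print(name, ordinal_encode(level, len(sizes)))
# small [-1, -1]
# medium [1, -1]
# large [1, 1]
```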

All solvers implement L1 soft-margin minimization.

For one-class learning, the software estimates the Lagrange multipliers, α1,...,αn, such that

$$\sum_{j=1}^{n} \alpha_j = n\nu,$$

where ν is the value of the Nu name-value argument.
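For one-class learning, the multipliers α1,...,αn sum to nν, where ν is the Nu value. A short Python sketch makes that constraint concrete. It additionally assumes that each multiplier satisfies 0 ≤ αj ≤ 1 in this scaling, a bound this section does not state. Under that assumption, reaching a total of nν requires at least ⌈nν⌉ nonzero multipliers, so ν acts as a lower bound on the fraction of observations that become support vectors. The helper names are hypothetical.

```python
import math


def uniform_alphas(n, nu):
    """A simple feasible point: alpha_j = nu for every j, which sums to n*nu
    and stays in [0, 1] when 0 < nu <= 1 (the [0, 1] bound is assumed)."""
    if not 0 < nu <= 1:
        raise ValueError("nu must be in (0, 1]")
    return [nu] * n


def min_nonzero_alphas(n, nu):
    """With every alpha_j at most 1 (assumed bound), a total of n*nu needs
    at least ceil(n*nu) nonzero multipliers, that is, support vectors."""
    return math.ceil(n * nu - 1e-9)  # small tolerance guards against float round-off


alphas = uniform_alphas(10, 0.25)
print(sum(alphas))  # -> 2.5
print(min_nonzero_alphas(10, 0.25))  # -> 3
print(min_nonzero_alphas(100, 0.05))  # -> 5
```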
