compareHoldout

Compare accuracies of two classification models using new data

Syntax

Description

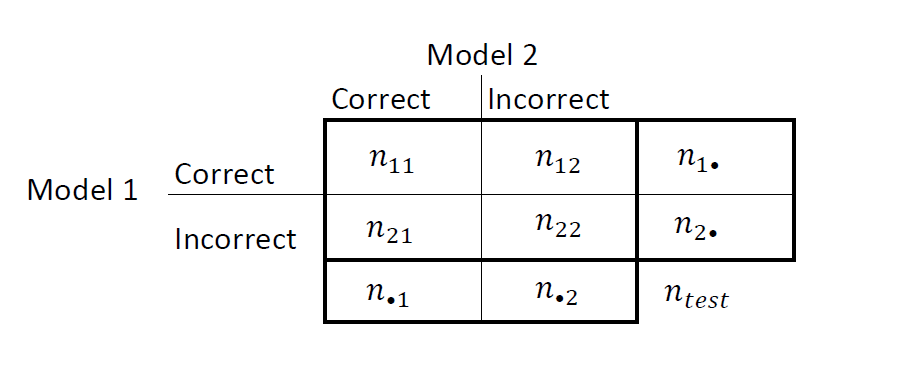

compareHoldout statistically assesses the accuracies of two classification models. The function first compares their predicted labels against the true labels, and then it detects whether the difference between the misclassification rates is statistically significant.

You can determine whether the accuracies of the classification models differ or whether one model performs better than another. compareHoldout can conduct several McNemar test variations, including the asymptotic test, the exact-conditional test, and the mid-p-value test. For cost-sensitive assessment, available tests include a chi-square test (requires Optimization Toolbox™) and a likelihood ratio test.

h = compareHoldout(C1,C2,T1,T2,ResponseVarName) returns the test decision from testing the null hypothesis that the trained classification models C1 and C2 have equal accuracy for predicting the true class labels in the ResponseVarName variable. The alternative hypothesis is that the models have unequal accuracy.

The first classification model C1 uses the predictor data in T1, and the second classification model C2 uses the predictor data in T2. The tables T1 and T2 must contain the same response variable but can contain different sets of predictors. By default, the software conducts the mid-p-value McNemar test to compare the accuracies.

h = 1 indicates rejecting the null hypothesis at the 5% significance level. h = 0 indicates not rejecting the null hypothesis at the 5% level.
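
As a minimal sketch of the table syntax, the following compares a decision tree and a discriminant analysis classifier on a holdout set. The choice of the fisheriris data, the 30% holdout fraction, the short variable names, and the two model types are assumptions made for illustration only.

```matlab
% Build a table from Fisher's iris data (illustrative choice of data set).
load fisheriris
tbl = array2table(meas,'VariableNames',{'SL','SW','PL','PW'});
tbl.Species = species;

% Hold out 30% of the observations as the test set (arbitrary fraction).
rng(1) % for reproducibility
cvp = cvpartition(tbl.Species,'HoldOut',0.3);
trainTbl = tbl(training(cvp),:);
testTbl = tbl(test(cvp),:);

% Train two different model types on the same training data.
C1 = fitctree(trainTbl,'Species');
C2 = fitcdiscr(trainTbl,'Species');

% Test the null hypothesis of equal accuracy on the holdout set.
% Both models use the same predictors here, so T1 and T2 are the same table.
h = compareHoldout(C1,C2,testTbl,testTbl,'Species')
```

Here h = 0 would mean that the holdout data do not provide enough evidence, at the 5% level, that the two models differ in accuracy.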

The following are examples of tests you can conduct:

Compare the accuracies of a simple classification model and a model that is more complex by passing the same set of predictor data (that is,

T1=T2).Compare the accuracies of two potentially different models using two potentially different sets of predictors.

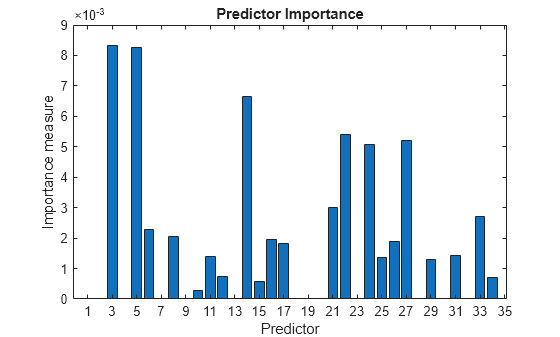

Perform various types of Feature Selection. For example, you can compare the accuracy of a model trained using a set of predictors to the accuracy of one trained on a subset or different set of those predictors. You can choose the set of predictors arbitrarily, or use a feature selection technique such as PCA or sequential feature selection (see

pcaandsequentialfs).
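
The last kind of test in the list above could look like the following sketch, which compares a tree trained on the original predictors with a tree trained on a few principal components. The ionosphere data, the number of retained components k, and the use of decision trees are assumptions chosen only to keep the example short.

```matlab
% Load example data: X is a numeric predictor matrix, Y holds class labels.
load ionosphere
rng(1) % for reproducibility
cvp = cvpartition(Y,'HoldOut',0.3);
XTrain = X(training(cvp),:);  YTrain = Y(training(cvp));
XTest  = X(test(cvp),:);      YTest  = Y(test(cvp));

% Fit PCA on the training data only, then project both sets.
k = 5; % number of components to keep (arbitrary, for illustration)
[coeff,~,~,~,~,mu] = pca(XTrain);
ZTrain = (XTrain - mu)*coeff(:,1:k);
ZTest  = (XTest - mu)*coeff(:,1:k);

% C1 uses the original predictors; C2 uses the reduced representation.
C1 = fitctree(XTrain,YTrain);
C2 = fitctree(ZTrain,YTrain);

% Each model is evaluated on its own version of the test-set predictors.
h = compareHoldout(C1,C2,XTest,ZTest,YTest)
```

The two predictor matrices have different numbers of columns but the same rows, so each test observation is scored by both models against the same true label.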

h = compareHoldout(C1,C2,T1,T2,Y) returns the test decision from testing the null hypothesis that the trained classification models C1 and C2 have equal accuracy for predicting the true class labels Y. The alternative hypothesis is that the models have unequal accuracy.

The first classification model C1 uses the predictor data T1, and the second classification model C2 uses the predictor data T2. By default, the software conducts the mid-p-value McNemar test to compare the accuracies.

h = compareHoldout(C1,C2,X1,X2,Y) returns the test decision from testing the null hypothesis that the trained classification models C1 and C2 have equal accuracy for predicting the true class labels Y. The alternative hypothesis is that the models have unequal accuracy.

The first classification model C1 uses the predictor data X1, and the second classification model C2 uses the predictor data X2. By default, the software conducts the mid-p-value McNemar test to compare the accuracies.

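h = compareHoldout(___,Name,Value) specifies options using one or more name-value arguments in addition to any of the input argument combinations in the previous syntaxes. For example, you can specify the type of alternative hypothesis or the type of test.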
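
A short sketch of the matrix syntax combined with name-value arguments follows. The data set, the model types, and the 1% significance level are assumptions for illustration. The example also requests the additional outputs p, e1, and e2 (the p-value and the two misclassification rates), which this page does not describe elsewhere.

```matlab
% Load example data and split it into training and test sets.
load ionosphere
rng(1) % for reproducibility
cvp = cvpartition(Y,'HoldOut',0.3);
idxTrain = training(cvp);
idxTest = test(cvp);

% Train an SVM and a decision tree on the same training data.
C1 = fitcsvm(X(idxTrain,:),Y(idxTrain),'Standardize',true);
C2 = fitctree(X(idxTrain,:),Y(idxTrain));

% Run the asymptotic McNemar test at the 1% level instead of the
% default mid-p-value test at the 5% level.
XTest = X(idxTest,:);
YTest = Y(idxTest);
[h,p,e1,e2] = compareHoldout(C1,C2,XTest,XTest,YTest, ...
    'Test','asymptotic','Alpha',0.01)
```

Inspecting e1 and e2 alongside h shows which model misclassifies less of the holdout set, which the test decision alone does not reveal.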

Examples

Input Arguments

Name-Value Arguments

Output Arguments

Limitations

- compareHoldout does not compare ECOC models composed of linear or kernel classification models (that is, ClassificationLinear or ClassificationKernel model objects). To compare ClassificationECOC models composed of linear or kernel classification models, use testcholdout instead.

- Similarly, compareHoldout does not compare ClassificationLinear or ClassificationKernel model objects. To compare these models, use testcholdout instead.
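
For models that compareHoldout does not accept, one possible workaround is to predict the holdout labels yourself and pass them to testcholdout, as in this sketch. The ionosphere data and the default settings of fitclinear and fitckernel are assumptions chosen for brevity.

```matlab
% Load example data and split it into training and test sets.
load ionosphere
rng(1) % for reproducibility
cvp = cvpartition(Y,'HoldOut',0.3);
idxTrain = training(cvp);
idxTest = test(cvp);

% Train a linear model and a kernel model (binary classifiers).
Mdl1 = fitclinear(X(idxTrain,:),Y(idxTrain));
Mdl2 = fitckernel(X(idxTrain,:),Y(idxTrain));

% Predict labels for the holdout observations with each model.
YHat1 = predict(Mdl1,X(idxTest,:));
YHat2 = predict(Mdl2,X(idxTest,:));

% Compare the two sets of predicted labels against the true labels.
h = testcholdout(YHat1,YHat2,Y(idxTest))
```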

More About

Tips

One way to perform cost-insensitive feature selection is:

1. Train the first classification model (C1) using the full predictor set.

2. Train the second classification model (C2) using the reduced predictor set.

3. Specify X1 as the full test-set predictor data and X2 as the reduced test-set predictor data.

4. Enter compareHoldout(C1,C2,X1,X2,Y,'Alternative','less'). If compareHoldout returns 1, then there is enough evidence to suggest that the classification model that uses fewer predictors performs better than the model that uses the full predictor set.

Alternatively, you can assess whether there is a significant difference between the accuracies of the two models. To perform this assessment, remove the 'Alternative','less' specification in step 4. compareHoldout conducts a two-sided test, and h = 0 indicates that there is not enough evidence to suggest a difference in the accuracy of the two models.

Cost-sensitive tests perform numerical optimization, which requires additional computational resources. The likelihood ratio test conducts numerical optimization indirectly by finding the root of a Lagrange multiplier in an interval. For some data sets, if the root lies close to the boundaries of the interval, then the method can fail. Therefore, if you have an Optimization Toolbox license, consider conducting the cost-sensitive chi-square test instead. For more details, see CostTest and Cost-Sensitive Testing.
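
The four feature-selection steps in the first tip can be sketched as follows. The ionosphere data, the decision tree models, and the reduced predictor set reducedIdx (simply the first ten columns) are hypothetical choices made for illustration.

```matlab
% Load example data and split it into training and test sets.
load ionosphere
rng(1) % for reproducibility
cvp = cvpartition(Y,'HoldOut',0.3);
idxTrain = training(cvp);
idxTest = test(cvp);
reducedIdx = 1:10; % hypothetical reduced predictor set

% Step 1: train C1 on the full predictor set.
C1 = fitctree(X(idxTrain,:),Y(idxTrain));

% Step 2: train C2 on the reduced predictor set.
C2 = fitctree(X(idxTrain,reducedIdx),Y(idxTrain));

% Step 3: assemble the matching test-set predictor data.
X1 = X(idxTest,:);
X2 = X(idxTest,reducedIdx);
YTest = Y(idxTest);

% Step 4: one-sided test. h = 1 suggests that the reduced model
% performs better than the full model.
h = compareHoldout(C1,C2,X1,X2,YTest,'Alternative','less')

% Two-sided alternative: omit the 'Alternative' specification.
hTwoSided = compareHoldout(C1,C2,X1,X2,YTest)
```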

Alternative Functionality

To directly compare the accuracy of two sets of class labels in predicting a set of true class labels, use testcholdout.